Retrieval-Augmented Generation (RAG) changed how teams ground LLMs in their own data, but classic vector search still struggles with multi-hop questions and global context. GraphRAG addresses that by adding a knowledge graph layer on top of retrieval. Microsoft Research reports up to 80% accuracy on complex queries versus around 50% for traditional RAG. That is roughly a 1.6x lift, and it helps explain why GraphRAG has become one of the hottest patterns of 2026.

This guide breaks down what GraphRAG is, how the indexing and query flow works, when it beats vector RAG, and exactly how to build a production pipeline.

What Is GraphRAG?

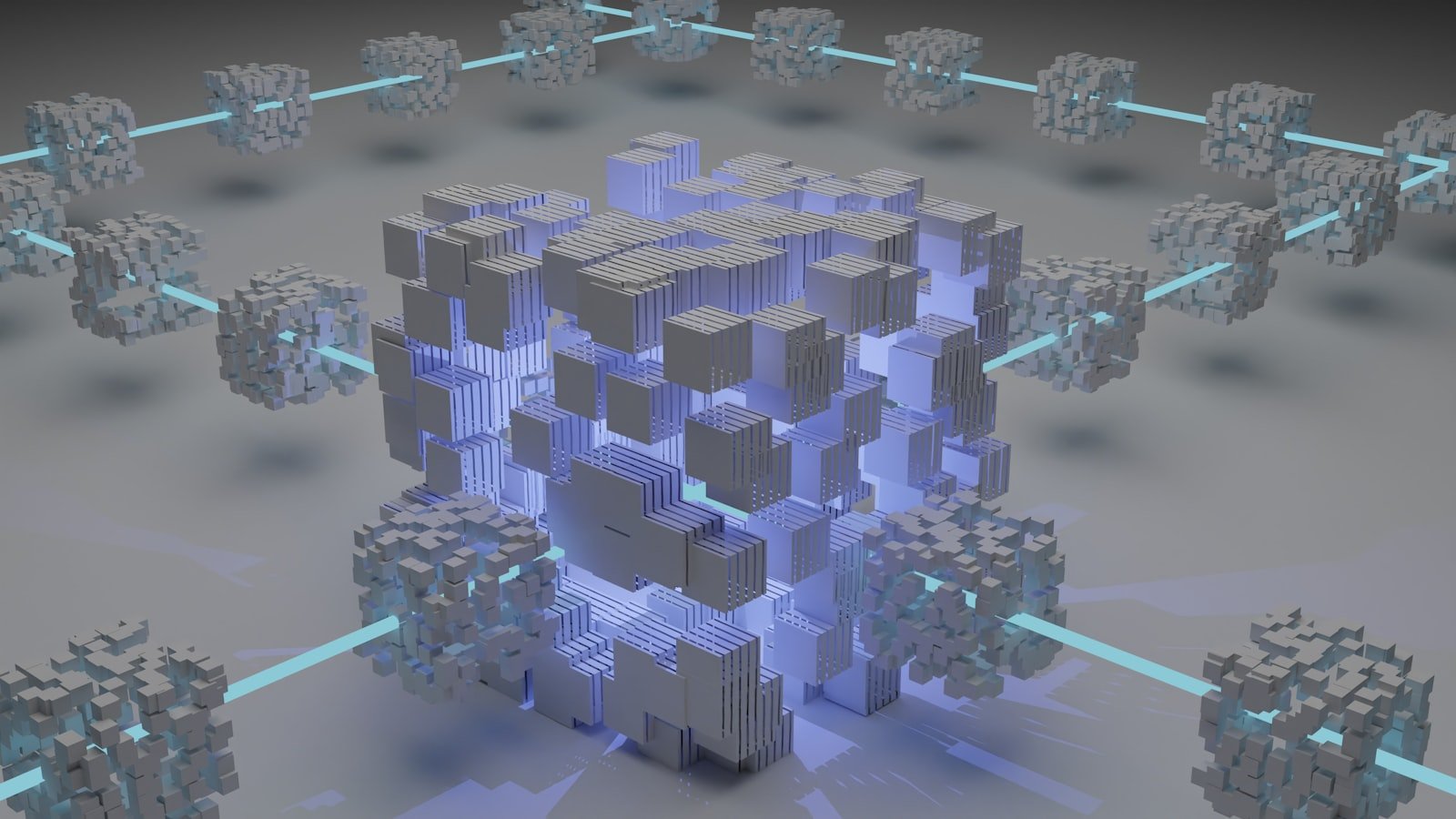

GraphRAG is a retrieval architecture that combines a knowledge graph with vector search to answer questions an LLM otherwise can’t reason across. Introduced by Microsoft Research in 2024, it constructs a graph of entities (people, products, concepts) and relationships from a text corpus, clusters that graph into “communities,” and then retrieves both passages and graph context at query time.

In plain terms: classic RAG finds the chunks most similar to your question. GraphRAG finds the concepts and connections most relevant to your question—and the chunks attached to them.

How GraphRAG Works

GraphRAG runs in two phases: an offline indexing pipeline that builds the graph, and an online query pipeline that uses it.

Indexing Phase

The indexing pipeline does five things:

- Chunk the corpus by semantic boundaries (paragraphs, sections), not fixed token windows.

- Extract entities and relationships from each chunk using an LLM, producing typed triples like (Anthropic, builds, Claude).

- Build the knowledge graph by merging duplicate entities and aggregating edge weights.

- Detect communities with the Leiden algorithm, grouping closely connected nodes into clusters.

- Summarize each community with an LLM, producing hierarchical summaries from fine-grained to high-level.

The output is a queryable artifact: nodes, edges, embeddings on chunk text, and natural-language summaries at every level of the hierarchy.
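To make the graph-building and clustering steps concrete, here is a minimal sketch in plain Python. It assumes the LLM extraction step has already produced triples (the sample data is made up), uses simple case-folding as a stand-in for real entity resolution, and swaps in connected components for the Leiden algorithm, which needs a dedicated library. The names normalize, build_graph, and communities are illustrative, not part of any GraphRAG library.

```python
from collections import defaultdict

# Hypothetical output of the LLM extraction step: (subject, relation, object).
triples = [
    ("Anthropic", "builds", "Claude"),
    ("anthropic", "builds", "Claude"),       # duplicate with different casing
    ("Claude", "competes_with", "GPT-4o"),
    ("Neo4j", "stores", "Graphs"),
]

def normalize(name: str) -> str:
    """Naive entity resolution: trim and case-fold. Real pipelines need more."""
    return name.strip().lower()

def build_graph(triples):
    """Merge duplicate entities and count repeated edges as weights."""
    edges = defaultdict(int)
    for subj, rel, obj in triples:
        edges[(normalize(subj), rel, normalize(obj))] += 1
    return dict(edges)

def communities(edges):
    """Group nodes into connected components (a simple stand-in for Leiden)."""
    adjacency = defaultdict(set)
    for subj, _rel, obj in edges:
        adjacency[subj].add(obj)
        adjacency[obj].add(subj)
    seen, groups = set(), []
    for start in sorted(adjacency):
        if start in seen:
            continue
        stack, group = [start], set()
        while stack:
            node = stack.pop()
            if node in group:
                continue
            group.add(node)
            stack.extend(adjacency[node] - group)
        seen |= group
        groups.append(sorted(group))
    return groups

graph = build_graph(triples)
groups = communities(graph)
```

In a real pipeline each group would then be passed to an LLM for summarization. Connected components are far coarser than Leiden communities, so treat this only as a way to see the data flow.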

Query Phase

GraphRAG supports two query modes:

- Local search answers entity-specific questions by retrieving the entity’s neighborhood, related chunks, and adjacent communities.

- Global search answers thematic, “what does the corpus say about X” questions by map-reducing across community summaries.

The right answer depends on the question. “Who funded Anthropic in 2024?” is local. “What are the dominant safety themes across the corpus?” is global.
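The two modes can be sketched roughly as follows. Everything here is an assumption for illustration: the edges and summaries are placeholder data (including the invented "investor a"), fake_llm stands in for a real model call, and local_search only looks one hop out, whereas a real implementation would also pull related chunks and adjacent communities.

```python
# Hypothetical index artifacts; a real pipeline produces these during indexing.
edges = [
    ("anthropic", "builds", "claude"),
    ("anthropic", "funded_by", "investor a"),
    ("neo4j", "stores", "graphs"),
]
community_summaries = {
    0: "Anthropic builds Claude and raised funding from Investor A.",
    1: "Neo4j is a database that stores graphs.",
}

def local_search(entity: str) -> list[tuple[str, str, str]]:
    """Return every edge that touches the entity (its one-hop neighborhood)."""
    return [edge for edge in edges if entity in (edge[0], edge[2])]

def fake_llm(prompt: str) -> str:
    """Placeholder for an LLM call: it simply echoes the prompt's last line."""
    return prompt.splitlines()[-1]

def global_search(question: str, llm=fake_llm) -> str:
    """Map-reduce over community summaries to answer a corpus-wide question."""
    # Map: get one partial answer per community summary.
    partials = [
        llm(f"Question: {question}\n{summary}")
        for summary in community_summaries.values()
    ]
    # Reduce: combine the partial answers in a single final call.
    return llm("Combine these partial answers:\n" + " | ".join(partials))
```

Note the cost shape this implies: local search is one graph lookup plus one generation, while global search makes one call per community summary plus a final reduce call.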

GraphRAG vs Traditional RAG

Vector RAG is fast, cheap, and excellent for fact lookup. It breaks down on three classes of queries:

- Multi-hop questions that require chaining facts (“Which board members of company X also sit on the boards of its competitors?”).

- Global questions that ask for themes spanning the whole corpus.

- Disambiguation when the same entity appears under different names.
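The board-member question shows why multi-hop queries suit a graph: answering it means joining two relationship types, which a similarity search over chunks has no direct way to do. A minimal sketch, assuming made-up sits_on and competes_with data and a hypothetical helper named shared_board_members:

```python
# Hypothetical board-membership and competitor relationships.
sits_on = {
    "alice": {"company x", "rival y"},
    "bob": {"company x"},
    "carol": {"rival y", "rival z"},
}
competes_with = {"company x": {"rival y", "rival z"}}

def shared_board_members(company: str) -> list[str]:
    """People on the company's board who also sit on a competitor's board."""
    rivals = competes_with.get(company, set())
    return sorted(
        person
        for person, boards in sits_on.items()
        if company in boards and boards & rivals
    )

overlap = shared_board_members("company x")
```

With the sample data, only alice sits on both the company's board and a rival's. In a graph database the same question becomes a two-hop pattern match rather than a Python loop.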

Microsoft’s published benchmarks show GraphRAG hitting 72–83% comprehensiveness on global questions versus 50–60% for vector RAG, with a 3.4x improvement on enterprise-style benchmarks.

The trade-off is cost. Indexing is dramatically more expensive because every chunk goes through an LLM for entity extraction. For a 1M-token corpus, plan for 5–10x the indexing spend of vector RAG.

When to Use GraphRAG

Reach for GraphRAG when:

- Your queries are exploratory or thematic, not lookup-style.

- The corpus has rich entity structure (legal contracts, research literature, regulatory filings, internal wikis).

- You need explainability—the graph makes “why did the model say this” trivially auditable.

- Multi-hop reasoning matters and you’ve already tried reranking.

Stick with vector RAG when latency is critical, the corpus is small, or queries are simple FAQ-style. For mid-complexity retrieval, reranking with cross-encoders is often a cheaper win than adding a graph.

How to Build a GraphRAG Pipeline

Here’s the minimum stack to get from raw documents to answered queries:

- Pick a graph store. Neo4j is the default. Memgraph, Kùzu, and AWS Neptune are viable alternatives. Some teams store the graph in their existing vector database using property graphs.

- Pick an extraction model. GPT-4o-mini, Claude Haiku 4.5, or Llama 3.3 70B all work. Cost dominates here, so use the smallest model that hits your extraction F1 target.

- Run Microsoft’s GraphRAG library (pip install graphrag) or LlamaIndex’s PropertyGraphIndex. Both ship indexing pipelines you can configure end-to-end.

- Tune chunk size and entity types. Domain-specific entity schemas beat generic ones. For legal text, define entity types like Party, Clause, Obligation before extraction.

- Choose your embedding model. Pair the graph with a strong embedding model—see our 2026 embedding model comparison for picks.

- Wire up local + global query routes. Classify the user’s question first, then dispatch to the right retrieval mode. A simple LLM classifier is enough.
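The routing step in the last item might look something like this. It is a sketch under assumptions: keyword_classifier is a crude keyword heuristic standing in for the LLM classifier, GLOBAL_HINTS is an invented list, and the two search functions are passed in so you can plug in whatever your stack provides.

```python
from typing import Callable

# Invented hint words; an LLM classifier would replace this heuristic.
GLOBAL_HINTS = ("themes", "overall", "across the corpus", "summarize", "trends")

def keyword_classifier(question: str) -> str:
    """Stand-in for an LLM classifier: returns "global" or "local"."""
    lowered = question.lower()
    return "global" if any(hint in lowered for hint in GLOBAL_HINTS) else "local"

def route(
    question: str,
    local_search: Callable[[str], str],
    global_search: Callable[[str], str],
    classify: Callable[[str], str] = keyword_classifier,
) -> str:
    """Dispatch the question to the retrieval mode the classifier picks."""
    mode = classify(question)
    handler = global_search if mode == "global" else local_search
    return handler(question)
```

Keeping the classifier injectable means you can start with the heuristic, log its decisions, and swap in an LLM call once you have examples of questions it gets wrong.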

Test rigorously. GraphRAG accuracy depends heavily on extraction quality, so build an eval set of multi-hop questions before you scale.
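An eval set does not need to be elaborate to be useful. The sketch below assumes a hypothetical list of question and expected-answer pairs and scores a pipeline by substring match, which is a deliberately crude metric; teams often move to LLM-graded or human-graded scoring later.

```python
# Hypothetical eval items; write these from your own corpus.
eval_set = [
    {
        "question": "Which board members of company X also sit on a competitor's board?",
        "expected": "alice",
    },
    {"question": "Who builds Claude?", "expected": "anthropic"},
]

def evaluate(answer_fn, eval_set) -> float:
    """Return the fraction of questions whose answer contains the expected text."""
    if not eval_set:
        raise ValueError("eval_set is empty")
    hits = sum(
        1
        for item in eval_set
        if item["expected"].lower() in answer_fn(item["question"]).lower()
    )
    return hits / len(eval_set)
```

Run the same eval_set against your existing vector RAG pipeline and the GraphRAG pipeline by passing each one as answer_fn, then compare the two scores.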

Tools and Frameworks Worth Knowing

- Microsoft GraphRAG — the reference implementation. Best for understanding the architecture; production teams often fork it.

- LlamaIndex PropertyGraphIndex — first-class graph support, easier ergonomics than Microsoft’s library.

- Neo4j GraphRAG — tightly integrated with Neo4j, includes vector + graph hybrid retrievers.

- Haystack 2.x — composable pipelines that mix retrievers, graph stores, and rerankers.

For agentic workflows that combine GraphRAG with planning loops, see our breakdown of Agentic RAG patterns.

FAQ: GraphRAG in 2026

Is GraphRAG just hype?

No. The accuracy gains on multi-hop and global questions are reproducible, but the cost trade-off is real. It’s a tool, not a default.

Can GraphRAG eliminate hallucinations?

No, but it can reduce them because retrieved context includes typed relationships, not just unstructured text. Grounding still matters, so pair it with the techniques in our hallucination guide.

Do I need a dedicated graph database?

For prototypes, no: NetworkX in memory works. For production with millions of nodes, yes. Neo4j and Memgraph are both battle-tested.

How much does GraphRAG cost to run?

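Indexing dominates the bill. Expect 5–10x the LLM spend of vector RAG for the first index. Incremental updates are much cheaper as long as only new or changed chunks are re-extracted, although the community summaries they touch may need regenerating. At query time, local search costs about the same as vector RAG, while global search adds LLM calls because it map-reduces across community summaries.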

Conclusion: Should GraphRAG Be in Your Stack?

GraphRAG isn’t a silver bullet, but it’s the right answer when your users ask questions vector search wasn’t designed for. The architecture pays off most in domains with dense entity relationships and exploratory query patterns. Start with Microsoft’s reference library, build a small eval set, and only then decide whether the indexing cost justifies the accuracy lift.

Ready to ship smarter retrieval? Pick a corpus this week, run the GraphRAG indexer, and benchmark it against your current RAG setup. The data will tell you whether GraphRAG belongs in your stack.