What Is the Model Context Protocol?

If you have ever wished your AI assistant could pull live data from a database, trigger an API call, or read files from your cloud storage without clunky workarounds, the Model Context Protocol (MCP) is the answer. Introduced by Anthropic in November 2024, MCP is an open standard that gives large language models a universal plug for connecting to external tools, data sources, and services.

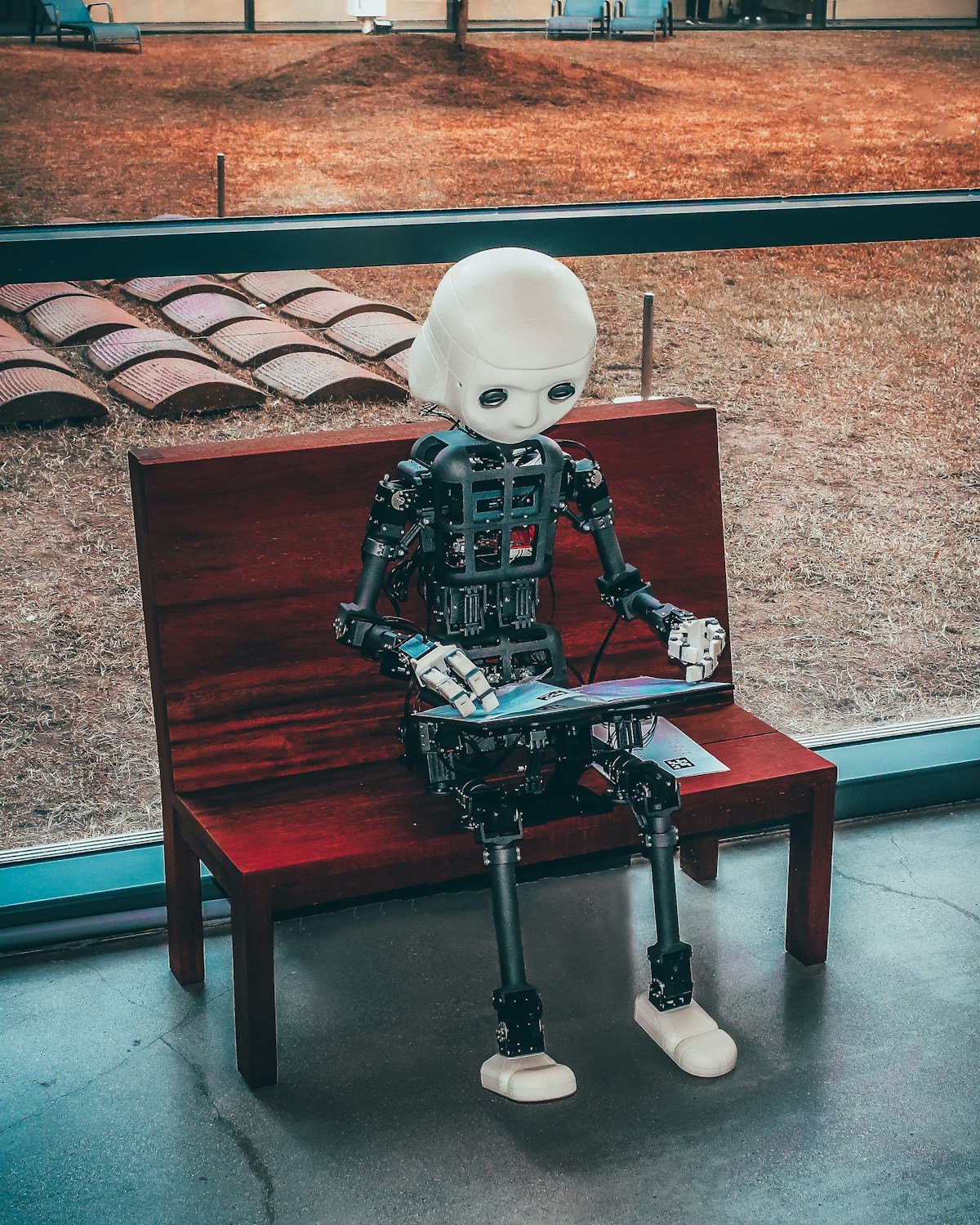

Think of it as USB-C for AI. Before MCP, every integration between an LLM and an outside system required a custom connector. Developers maintained dozens of one-off adapters that broke with every API change. MCP replaces that mess with a single, standardized protocol: build one server, and any MCP-compatible model can use it.

By March 2026, Anthropic reported over 10,000 active public MCP servers and 97 million monthly SDK downloads. OpenAI, Google, Microsoft, and AWS have all adopted the standard, making it the de facto way AI applications talk to the outside world.

How the Model Context Protocol Works

MCP uses JSON-RPC 2.0 messages to establish stateful communication between three layers:

- Host — The LLM application the user interacts with, such as an AI-powered IDE or a chat interface like Claude Desktop.

- Client — A lightweight connector inside the host that translates the LLM’s requests into MCP messages and routes responses back.

- Server — An external service that exposes capabilities (data, tools, or prompt templates) in a format the LLM can understand.

When a user asks the AI to “check my latest sales numbers,” the model decides it needs outside data, the host hands that request to its MCP client, the client sends a JSON-RPC request to the appropriate MCP server, and the server queries the database and returns structured results. The protocol adds little overhead of its own, so the round trip is usually dominated by the backend query, and the LLM never needs custom code for that specific database.
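To make the round trip concrete, here is a minimal sketch of the two messages involved, built as plain Python dictionaries. The tool name `get_sales_summary`, its arguments, and the result text are hypothetical and chosen only for illustration; the envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`) follow JSON-RPC 2.0.

```python
import json


def make_tool_call_request(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking a server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def make_tool_call_response(request_id, text):
    """Build the matching JSON-RPC 2.0 response carrying a text result."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,  # same id as the request, so the client can pair them
        "result": {
            "content": [{"type": "text", "text": text}],
            "isError": False,
        },
    }


# Hypothetical tool name, arguments, and result text (assumptions for this sketch).
request = make_tool_call_request(1, "get_sales_summary", {"period": "last_7_days"})
response = make_tool_call_response(request["id"], "Total sales: 1,234 units")

print(json.dumps(request, indent=2))
print(json.dumps(response, indent=2))
```

The client matches a response to its request through the shared `id`, which is what lets several calls be in flight over one connection.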

Three Core Primitives

Every MCP server exposes capabilities through three building blocks:

- Resources — Read-only access to structured data. An MCP server for Google Drive, for example, can let the LLM browse and read documents without the ability to modify them.

- Tools — Executable functions that perform side effects such as sending an email, writing to a database, or calling a third-party API.

- Prompts — Reusable templates and workflows that standardize common interaction patterns, reducing repetitive prompt engineering.

This separation of concerns keeps the protocol clean. A server can offer just resources (safe, read-only), just tools (action-oriented), or a combination of all three.
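As a rough illustration of that separation, the sketch below models a server's capabilities as three independent lists and answers the corresponding `resources/list`, `tools/list`, and `prompts/list` requests. The `ServerCapabilities` class and the sample entries are hypothetical, not part of any SDK, and a real server would normally reject list methods for capabilities it never declared rather than return an empty list.

```python
from dataclasses import dataclass, field


@dataclass
class ServerCapabilities:
    """Hypothetical container for the three MCP primitives."""
    resources: list = field(default_factory=list)
    tools: list = field(default_factory=list)
    prompts: list = field(default_factory=list)

    def list_result(self, method):
        """Return the result payload for one of the three list methods."""
        kind, _, action = method.partition("/")
        if action != "list" or kind not in ("resources", "tools", "prompts"):
            raise ValueError(f"unsupported method: {method}")
        # Simplification: an undeclared primitive yields an empty list here.
        return {kind: getattr(self, kind)}


# A read-only server exposes resources and nothing else.
read_only_server = ServerCapabilities(
    resources=[{"uri": "file:///reports/q1.txt", "name": "Q1 report"}],
)

# A mixed server combines all three primitives (sample entries are made up).
mixed_server = ServerCapabilities(
    resources=[{"uri": "file:///reports/q1.txt", "name": "Q1 report"}],
    tools=[{
        "name": "send_email",
        "description": "Send an email to one recipient.",
        "inputSchema": {
            "type": "object",
            "properties": {"to": {"type": "string"}},
            "required": ["to"],
        },
    }],
    prompts=[{"name": "summarize_report", "description": "Summarize a report."}],
)

print(read_only_server.list_result("resources/list"))
print(mixed_server.list_result("tools/list"))
```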

Why MCP Matters for Developers in 2026

The rise of AI agents has made MCP essential rather than optional. Agents need to chain multiple tool calls, maintain state across steps, and interact with real-world systems reliably. Without a shared protocol, every agent framework reinvents its own tool-calling layer, leading to fragmentation and wasted effort.

MCP solves this by offering a single integration surface. If you build an MCP server for your internal ticketing system, it works with Claude, ChatGPT, Gemini, and any open-source model that supports the standard. That write-once-run-everywhere promise is why adoption has been so fast.

For Java full-stack developers, MCP servers are straightforward to implement. The specification is language-agnostic, and SDKs exist for Java, TypeScript, Python, Go, and Rust, among others. A typical remote Java MCP server is a lightweight HTTP service that exposes a JSON-RPC endpoint, a pattern most backend engineers already know.

Real-World Use Cases

MCP is already powering production workflows across industries. Here are some of the most common applications:

- Code assistants — IDEs like Cursor and VS Code use MCP to let AI read project files, run linters, and execute tests without leaving the editor.

- Customer support — Support bots connect to CRM and ticketing MCP servers to look up customer history, create tickets, and escalate issues automatically.

- Data analysis — Analysts ask their AI assistant to query a Postgres or BigQuery MCP server, then visualize the results in a single conversation.

- DevOps automation — MCP servers for Kubernetes, AWS, and CI/CD pipelines let engineers manage infrastructure through natural language commands.

- Enterprise search — MCP servers for Confluence, Notion, and SharePoint give AI unified access to company knowledge bases.

Security Considerations

Because MCP servers can execute arbitrary code and access sensitive data, security is a first-class concern in the specification. It requires hosts to obtain explicit user consent before invoking a tool or exposing user data to a server. The protocol cannot enforce this on its own, so hosts must present clear authorization flows that let users understand exactly what they are approving.

In enterprise deployments, teams typically add an authentication layer (OAuth 2.0 or mutual TLS) in front of their MCP servers and restrict which tools are available to which users. The official specification provides detailed implementation guidelines for building secure MCP integrations.
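One way to picture the per-user restriction is a small gate that runs before any tool call reaches the server. This is only a sketch under stated assumptions: the role names, tool names, and the `ROLE_TOOLS`, `visible_tools`, and `authorize_call` helpers are hypothetical, and authentication (OAuth 2.0 or mutual TLS) is assumed to have already established the caller's role.

```python
# Hypothetical mapping from an authenticated user's role to permitted tools.
ROLE_TOOLS = {
    "support_agent": {"lookup_customer", "create_ticket"},
    "admin": {"lookup_customer", "create_ticket", "delete_ticket"},
}


def visible_tools(all_tools, role):
    """Filter a tool list down to what this role is allowed to see."""
    allowed = ROLE_TOOLS.get(role, set())
    return [tool for tool in all_tools if tool["name"] in allowed]


def authorize_call(tool_name, role, user_approved):
    """Allow a call only if the role permits it AND the user consented."""
    if tool_name not in ROLE_TOOLS.get(role, set()):
        return False, "tool not permitted for this role"
    if not user_approved:
        return False, "user has not approved this call"
    return True, "ok"


all_tools = [
    {"name": "lookup_customer"},
    {"name": "create_ticket"},
    {"name": "delete_ticket"},
]

print([t["name"] for t in visible_tools(all_tools, "support_agent")])
print(authorize_call("delete_ticket", "support_agent", user_approved=True))
print(authorize_call("create_ticket", "support_agent", user_approved=False))
print(authorize_call("create_ticket", "support_agent", user_approved=True))
```

Hiding disallowed tools from the list and checking again at call time are deliberately separate steps, since a client could still name a tool it was never shown.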

How to Get Started with MCP

Getting started with the Model Context Protocol is easier than you might expect. Follow these steps to build your first MCP server:

- Pick your SDK — Choose the official SDK for your language. TypeScript and Python have the most mature libraries, but the Java and Go SDKs are production-ready in 2026.

- Define your capabilities — Decide whether your server will expose resources, tools, prompts, or a mix. Start simple with one or two tools.

- Implement the JSON-RPC endpoint — Your server needs to handle the `initialize`, `tools/list`, and `tools/call` methods at minimum.

- Test locally — Use the MCP Inspector tool or connect your server to Claude Desktop for quick testing.

- Deploy and register — Host your server behind HTTPS, add authentication, and optionally publish it to an MCP registry for others to discover.
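The endpoint step can be sketched as a single dispatch function that takes one decoded JSON-RPC message and returns the reply. This is a hand-rolled illustration, not the SDK's API: the `add` tool, the `demo-server` name, and the `PROTOCOL_VERSION` value are assumptions, and the transport (stdio or HTTP) is left out entirely. In practice an SDK handles this layer for you.

```python
import json

PROTOCOL_VERSION = "2025-06-18"  # assumed spec revision; negotiate in real code

# Hypothetical tool registry: one toy tool with a JSON Schema for its input.
TOOLS = {
    "add": {
        "description": "Add two numbers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
            "required": ["a", "b"],
        },
        "handler": lambda args: str(args["a"] + args["b"]),
    }
}


def _result(msg_id, result):
    return {"jsonrpc": "2.0", "id": msg_id, "result": result}


def _error(msg_id, code, message):
    return {"jsonrpc": "2.0", "id": msg_id, "error": {"code": code, "message": message}}


def handle_message(message):
    """Dispatch one JSON-RPC message; return a response, or None for notifications."""
    if "id" not in message:
        return None  # notifications (e.g. notifications/initialized) get no reply
    msg_id, method = message["id"], message.get("method")
    params = message.get("params") or {}

    if method == "initialize":
        return _result(msg_id, {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "demo-server", "version": "0.1.0"},
        })
    if method == "tools/list":
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return _result(msg_id, {"tools": tools})
    if method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(msg_id, -32602, f"Unknown tool: {params.get('name')}")
        try:
            text = tool["handler"](params.get("arguments") or {})
        except Exception as exc:  # tool failures are reported inside the result
            return _result(msg_id, {
                "content": [{"type": "text", "text": f"Tool failed: {exc}"}],
                "isError": True,
            })
        return _result(msg_id, {"content": [{"type": "text", "text": text}], "isError": False})
    return _error(msg_id, -32601, f"Method not found: {method}")


reply = handle_message({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "add", "arguments": {"a": 2, "b": 3}},
})
print(json.dumps(reply))
```

Keeping the dispatcher as a pure function from message to reply makes it easy to unit test before wiring it to a real transport.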

The entire process can take as little as an afternoon for a simple tool server. The official MCP documentation includes step-by-step tutorials and example servers to help you get started.

Frequently Asked Questions

What is the Model Context Protocol used for?

The Model Context Protocol is used to connect LLMs with external data sources and tools through a standardized interface. It allows AI applications to read files, query databases, call APIs, and perform actions in third-party services without requiring custom integration code for each system.

Is MCP only for Anthropic’s Claude?

No. While Anthropic created MCP, it is an open standard that any AI provider can adopt. As of 2026, OpenAI, Google, Microsoft, and AWS all support MCP, along with dozens of open-source LLM frameworks. Any MCP server works with any MCP-compatible client.

How is MCP different from function calling?

Function calling is a feature built into specific LLM APIs that lets the model request a function execution. MCP is a protocol layer that sits above function calling and standardizes how tools are discovered, described, and invoked across different models and hosts. MCP makes tool integrations portable, while function calling is provider-specific.
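The sketch below illustrates that layering: one MCP-style tool description is translated into two provider-specific function-calling shapes. The `get_weather` tool is hypothetical, and the two target formats reflect my understanding of the OpenAI and Anthropic tool schemas at the time of writing, so treat them as assumptions and check the current API references before relying on them.

```python
def to_openai_style(tool):
    """Wrap an MCP tool description in an OpenAI-style function definition."""
    return {
        "type": "function",
        "function": {
            "name": tool["name"],
            "description": tool.get("description", ""),
            "parameters": tool["inputSchema"],
        },
    }


def to_anthropic_style(tool):
    """Rename fields to match an Anthropic-style tool definition."""
    return {
        "name": tool["name"],
        "description": tool.get("description", ""),
        "input_schema": tool["inputSchema"],
    }


# Hypothetical tool, in the shape an MCP server returns from tools/list.
mcp_tool = {
    "name": "get_weather",
    "description": "Look up the current weather for a city.",
    "inputSchema": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}

print(to_openai_style(mcp_tool))
print(to_anthropic_style(mcp_tool))
```

The tool is described once, on the server, and each host does this kind of translation internally, which is why the server author never has to target a specific provider.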

Can I build an MCP server in Java?

Yes. A Java SDK is available, and since MCP is JSON-RPC over stdio or HTTP, you can also implement it yourself on top of any HTTP framework such as Spring Boot or Quarkus. The protocol is language-agnostic by design.

Conclusion

The Model Context Protocol has quickly become the backbone of AI-tool integration in 2026. With nearly 100 million monthly SDK downloads, support from every major AI provider, and a growing ecosystem of over 10,000 public servers, MCP is no longer experimental; it is infrastructure.

Whether you are building AI agents, integrating LLMs into enterprise workflows, or just want your chat assistant to do more than generate text, understanding MCP is now a core skill. Start with a simple server, connect it to your favorite AI host, and experience the difference a standardized protocol makes.
